This Week in Changelogs: curl

Hey everyone, long time no see!

I started TWiC in 2023, and to be honest, mining diffs manually was exhausting, that's why it faded away pretty quick. Today, with a little bit of LLM and automation, it became much easier to find hidden gems in modern OSS and bring it to the audience. There's another problem though: sometimes there's too many of the gems, and I definitely don't want to re-start a series of boring longreads.

So today, we're gonna cover recent changes in only one project, (arguably) the most popular libary and command-line tool in the world - curl.

Zip bomb protection via delivered-bytes tracking

A zip bomb is when you send a relatively small compressed piece of data, which is automatically decompressed on the client side into a giant blob, causing DoS. One of the ways to protect from it - set a limit on the incoming data size. Curl had an option for it, CURLOPT_MAXFILESIZE, since 2003.

There was one small problem: before 8.20.0 it only affected the amount of downloaded, i.e. compressed, bytes. The good news is that now they calculate delivered, decompressed, bytes separately, extending CURLOPT_MAXFILESIZE to act as a zip bomb protection:

/* check that the 'delta' amount of bytes are okay to deliver to the

application, or return error if not. */

CURLcode Curl_pgrs_deliver_check(struct Curl_easy *data, size_t delta)

{

if(data->set.max_filesize &&

((curl_off_t)delta > data->set.max_filesize - data->progress.deliver)) {

failf(data, "Would have exceeded max file size");

return CURLE_FILESIZE_EXCEEDED;

}

return CURLE_OK;

}

Btw, check out the pull request itself: five AI bots and zero people besides the author (Daniel himself) was involved in the code review! And it actually helps a lot, because, well, check out the next one:

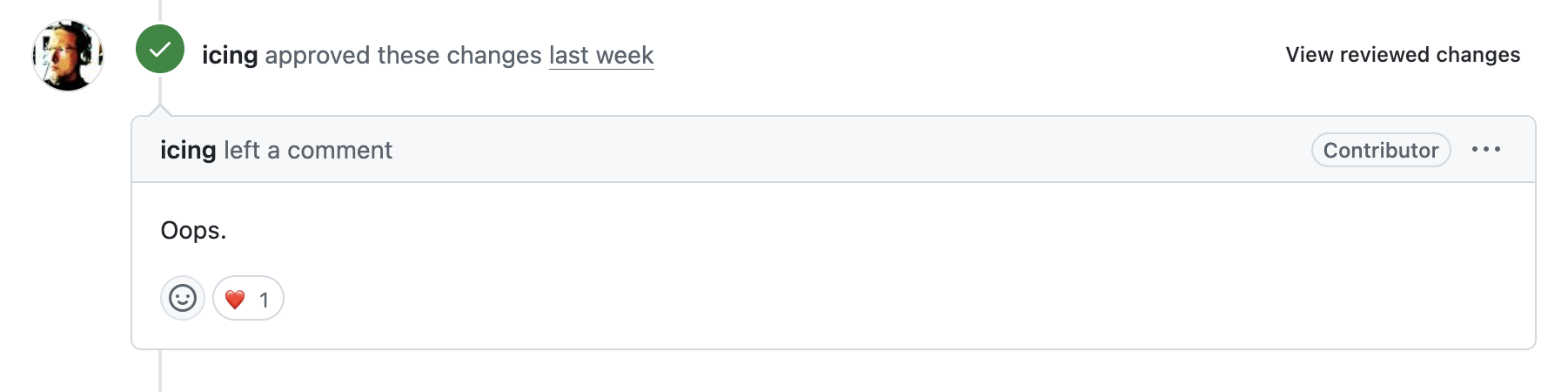

API bitmask copy-paste bug

CURLMNWC_CLEAR_DNS and CURLMNWC_CLEAR_CONNS were both defined as (1L << 0) (or just 1) , so you could never clear just DNS or just connections - always both.

The bug was introduced mid-2025 (PR, diff), and despite 3 people involved as code reviewers and 2 approvals, it has made it into 8.16.0 to 8.19.0:

Luckily, Codex Security found it (although, I suspect a decent static analyzer would find it many years before LLM era), and the author of the original changeset came up with an elegant solution: introduce CURLMNWC_CLEAR_ALL as the new (1L << 0) (since that's what every existing caller was unknowingly doing), and reassigns DNS and CONNS to bits 1 and 2:

if(val & CURLMNWC_CLEAR_ALL)

/* In the beginning, all values available to set were 1 by mistake. We

converted this to mean "all", thus setting all the bits

automatically */

val = CURLMNWC_CLEAR_DNS | CURLMNWC_CLEAR_CONNS;

So yeah, if you can afford involving complex static analyzers or modern AI-reviewers, you'd better do that, because even seasoned lead devs can miss such small issues.

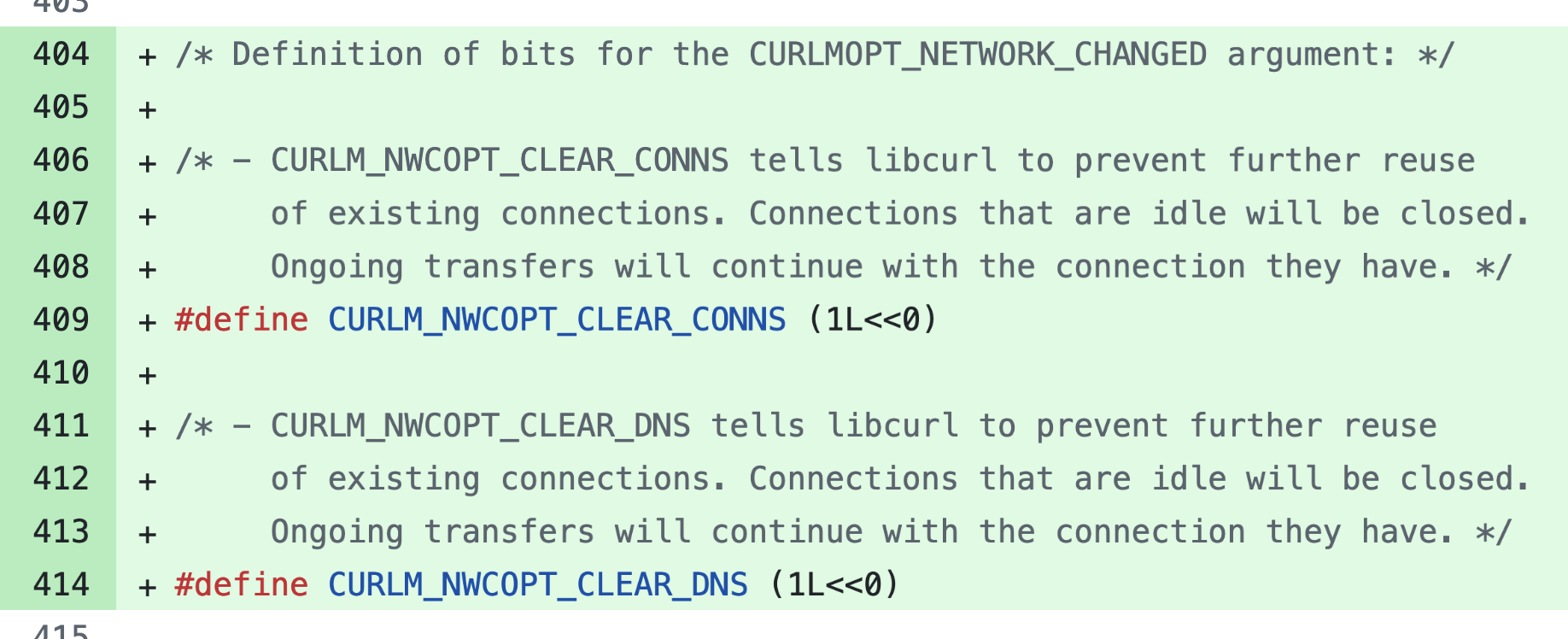

Missing return

Long story short, there's a helper called return_quote_error(), and thanks to its name, there were a lot of places where the actual return was forgot before the call, causing segfaults.

This is a classic case of a fix so small you're 100% sure you're right, skip the review, and just merge broken code:

I personally like that apart from fixing this problem, they removed the return_ prefix from the function name to avoid future confusions. Of course, such problems will also be caught by AI checkers, but here's another story:

PowerPC64 endianness AI-slop

A PR from a user named AutoJanitor with a robot on the avatar and a shitload of contribution since the end of January 2026 added __powerpc64__ to the list of architectures that can use unaligned memory access for MD5/MD4 fast paths with the following description:

Currently the fast path only covers

__i386__,__x86_64__, and__vax__. PowerPC64 (both LE and BE) (sic! - @dima) supports efficient unaligned memory access and should use the same optimization.<...>

Verified on IBM POWER8 S824 (ppc64le) — unaligned 32-bit loads work correctly and produce single

lwzinstructions.

To understand what it means, let's check out the code itself:

/*

* SET reads 4 input bytes in little-endian byte order and stores them

* in a properly aligned word in host byte order.

*

* The check for little-endian architectures that tolerate unaligned memory

* accesses is an optimization. Nothing will break if it does not work.

*/

#if defined(__i386__) || defined(__x86_64__) || \

defined(__vax__) || defined(__powerpc64__)

/* NB ^^^^^^^^^^^^^^^^^^^^^^ */

#define MD4_SET(n) (*(const uint32_t *)(const void *)&ptr[(n) * 4])

/* ... */

So this is an optimization for little-endian architectures, and, as pointed out later, an undefined behaviour.

Anyway, the contribution looked rock-solid, got merged, and a few days later, a real person (the guy has a website with /~sam/, he knows his shit) came into the original PR, a discussion happened, and the commit was reverted.

The thing is that while PowerPC can run in either endianness, the preprocessor check __powerpc64__ doesn't tell you which one. The optimization above is exclusively for little-endian cases (casting byte arrays directly to uint32_t * causing unaligned memory access), so on big-endian PowerPC64 it produced wrong results or just failed.

Unfortunately, AI bots have once again slightly ruined things for Daniel Stenberg. Apparently, that's the fate of popular projects these days ¯\_(ツ)_/¯

Thank you folks, I hope you enjoyed this "episode" of This Week in Changelogs! I hope next time there will be less of AI slop problems and more of something to actually learn from.